CEDMAV Publications

2011

B. Summa, G. Scorzelli, M. Jiang, P.-T. Bremer, V. Pascucci.

“Interactive Editing of Massive Imagery Made Simple: Turning Atlanta into Atlantis,” In ACM Transactions on Graphics, Vol. 30, No. 2, pp. 7:1--7:13. April, 2011.

DOI: 10.1145/1944846.1944847

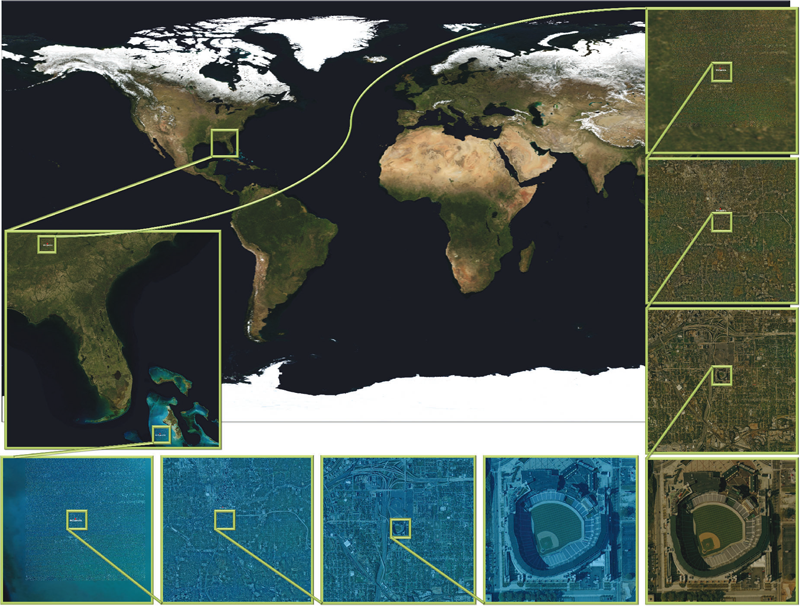

This article presents a simple framework for progressive processing of high-resolution images with minimal resources. We demonstrate this framework's effectiveness by implementing an adaptive, multi-resolution solver for gradient-based image processing that, for the first time, is capable of handling gigapixel imagery in real time. With our system, artists can use commodity hardware to interactively edit massive imagery and apply complex operators, such as seamless cloning, panorama stitching, and tone mapping.

We introduce a progressive Poisson solver that processes images in a purely coarse-to-fine manner, providing near instantaneous global approximations for interactive display (see Figure 1). We also allow for data-driven adaptive refinements to locally emulate the effects of a global solution. These techniques, combined with a fast, cache-friendly data access mechanism, allow the user to interactively explore and edit massive imagery, with the illusion of having a full solution at hand. In particular, we demonstrate the interactive modification of gigapixel panoramas that previously required extensive offline processing. Even with massive satellite images surpassing a hundred gigapixels in size, we enable repeated interactive editing in a dynamically changing environment. Images at these scales are significantly beyond the purview of previous methods yet are processed interactively using our techniques. Finally, our system provides a robust and scalable out-of-core solver that consistently offers high-quality solutions while maintaining strict control over system resources.
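The coarse-to-fine idea in this abstract can be illustrated with a minimal sketch. It is not the authors' solver: it assumes a 1D Poisson problem u'' = f on [0, 1] with fixed end values as a stand-in for the 2D gradient-domain image problem, and it uses Gauss-Seidel relaxation as the per-level smoother. The names `relax`, `prolong`, and `progressive_solve` are hypothetical.

```python
def relax(u, f, h, sweeps):
    """Run Gauss-Seidel sweeps for u'' = f, keeping both end values fixed."""
    for _ in range(sweeps):
        for i in range(1, len(u) - 1):
            # Rearranged finite difference: (u[i-1] - 2u[i] + u[i+1]) / h^2 = f
            u[i] = 0.5 * (u[i - 1] + u[i + 1] - h * h * f(i * h))
    return u


def prolong(coarse):
    """Linearly interpolate onto a grid with twice as many intervals."""
    fine = []
    for left, right in zip(coarse, coarse[1:]):
        fine.extend([left, 0.5 * (left + right)])
    fine.append(coarse[-1])
    return fine


def progressive_solve(f, left, right, levels, sweeps=50):
    """Yield one approximation per level, coarsest first.

    Each level starts from the interpolated coarser result; there is no
    fine-to-coarse pass, so every yielded grid is usable for display.
    """
    u = [left, 0.5 * (left + right), right]
    for level in range(levels):
        if level > 0:
            u = prolong(u)
        h = 1.0 / (len(u) - 1)
        u = relax(u, f, h, sweeps)
        yield list(u)


if __name__ == "__main__":
    # u'' = 2 with u(0) = 0 and u(1) = 1 has the exact solution u(x) = x^2.
    for grid in progressive_solve(lambda x: 2.0, 0.0, 1.0, levels=3):
        print(len(grid), [round(value, 4) for value in grid])
```

Because each level is yielded as soon as it is relaxed, a viewer could show the coarse result immediately and replace it as finer levels arrive, which is the behavior the abstract describes as a near-instantaneous global approximation.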

D. Thompson, J.A. Levine, J.C. Bennett, P.-T. Bremer, A. Gyulassy, V. Pascucci, P.P. Pebay.

“Analysis of Large-Scale Scalar Data Using Hixels,” In Proceedings of the 2011 IEEE Symposium on Large-Scale Data Analysis and Visualization (LDAV), Providence, RI, pp. 23--30. 2011.

DOI: 10.1109/LDAV.2011.6092313

H.T. Vo, J. Bronson, B. Summa, J.L.D. Comba, J. Freire, B. Howe, V. Pascucci, C.T. Silva.

“Parallel Visualization on Large Clusters using MapReduce,” SCI Technical Report, No. UUSCI-2011-002, SCI Institute, University of Utah, 2011.

H.T. Vo, J. Bronson, B. Summa, J.L.D. Comba, J. Freire, B. Howe, V. Pascucci, C.T. Silva.

“Parallel Visualization on Large Clusters using MapReduce,” In Proceedings of the 2011 IEEE Symposium on Large-Scale Data Analysis and Visualization (LDAV), pp. 81--88. 2011.

Large-scale visualization systems are typically designed to efficiently "push" datasets through the graphics hardware. However, exploratory visualization systems are increasingly expected to support scalable data manipulation, restructuring, and querying capabilities in addition to core visualization algorithms. We posit that new emerging abstractions for parallel data processing, in particular computing clouds, can be leveraged to support large-scale data exploration through visualization. In this paper, we take a first step in evaluating the suitability of the MapReduce framework to implement large-scale visualization techniques. MapReduce is a lightweight, scalable, general-purpose parallel data processing framework increasingly popular in the context of cloud computing. Specifically, we implement and evaluate a representative suite of visualization tasks (mesh rendering, isosurface extraction, and mesh simplification) as MapReduce programs, and report quantitative performance results applying these algorithms to realistic datasets. For example, we perform isosurface extraction of up to 16 isovalues for volumes composed of 27 billion voxels, simplification of meshes with 30GBs of data and subsequent rendering with image resolutions up to 80000² pixels. Our results indicate that the parallel scalability, ease of use, ease of access to computing resources, and fault-tolerance of MapReduce offer a promising foundation for a combined data manipulation and data visualization system deployed in a public cloud or a local commodity cluster.

Keywords: MapReduce, Hadoop, cloud computing, large meshes, volume rendering, gigapixels
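As a rough illustration of how an extraction task might be phrased as map, shuffle, and reduce steps, the sketch below finds level-set crossings of a 1D signal for several isovalues. It is an assumption-laden toy, not the paper's implementation: the 1D signal stands in for a volume, keying the intermediate pairs by isovalue is an assumed design, and the functions `map_cell`, `shuffle`, `reduce_isovalue`, and `extract` are hypothetical and run in a single process rather than on Hadoop.

```python
from collections import defaultdict


def map_cell(cell_id, end_values, isovalues):
    """Map step: emit (isovalue, crossing position) for one cell."""
    lo, hi = end_values
    for iso in isovalues:
        # Half-open test: avoids double counting at shared samples and
        # never divides by zero, because it fails when lo == hi.
        if min(lo, hi) <= iso < max(lo, hi):
            t = (iso - lo) / (hi - lo)  # linear interpolation inside the cell
            yield iso, cell_id + t


def shuffle(pairs):
    """Shuffle step: group all emitted values by key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_isovalue(iso, positions):
    """Reduce step: assemble the crossings that belong to one isovalue."""
    return iso, sorted(positions)


def extract(samples, isovalues):
    """Run the three steps over every cell (pair of neighboring samples)."""
    cells = [(i, (samples[i], samples[i + 1])) for i in range(len(samples) - 1)]
    pairs = [kv for cell_id, ends in cells
             for kv in map_cell(cell_id, ends, isovalues)]
    return dict(reduce_isovalue(k, v) for k, v in shuffle(pairs).items())


if __name__ == "__main__":
    print(extract([0.0, 2.0, 4.0, 2.0, 0.0], [1.0, 3.0]))
```

Each call to `map_cell` depends only on its own cell, which is what would let a framework distribute the map step across machines; only the grouping by key requires communication.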

H.T. Vo, C.T. Silva, L.F. Scheidegger, V. Pascucci.

“Simple and Efficient Mesh Layout with Space-Filling Curves,” In Journal of Graphics, GPU, and Game Tools, pp. 25--39. 2011.

ISSN: 2151-237X

Bei Wang, B. Summa, V. Pascucci, M. Vejdemo-Johansson.

“Branching and Circular Features in High Dimensional Data,” SCI Technical Report, No. UUSCI-2011-005, SCI Institute, University of Utah, 2011.

Bei Wang, B. Summa, V. Pascucci, M. Vejdemo-Johansson.

“Branching and Circular Features in High Dimensional Data,” In IEEE Transactions on Visualization and Computer Graphics (TVCG), Vol. 17, No. 12, pp. 1902--1911. 2011.

DOI: 10.1109/TVCG.2011.177

PubMed ID: 22034307

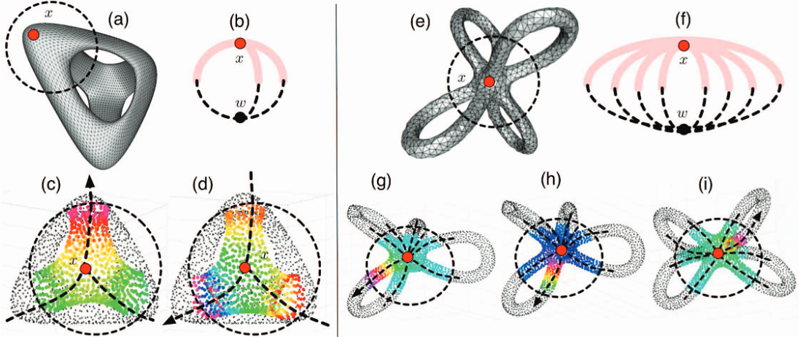

Large observations and simulations in scientific research give rise to high-dimensional data sets that present many challenges and opportunities in data analysis and visualization. Researchers in application domains such as engineering, computational biology, climate study, imaging and motion capture are faced with the problem of how to discover compact representations of high-dimensional data while preserving their intrinsic structure. In many applications, the original data is projected onto a low-dimensional space via dimensionality reduction techniques prior to modeling. One problem with this approach is that the projection step can fail to preserve structure in the data that is only apparent in high dimensions. Conversely, such techniques may create structural illusions in the projection, implying structure not present in the original high-dimensional data. Our solution is to utilize topological techniques to recover important structures in high-dimensional data that contains non-trivial topology. Specifically, we are interested in high-dimensional branching structures. We construct local circle-valued coordinate functions to represent such features. Subsequently, we perform dimensionality reduction on the data while ensuring such structures are visually preserved. Additionally, we study the effects of global circular structures on visualizations. Our results reveal never-before-seen structures on real-world data sets from a variety of applications.

Keywords: Dimensionality reduction, circular coordinates, visualization, topological analysis

S. Williams, M. Petersen, P.-T. Bremer, M. Hecht, V. Pascucci, J. Ahrens, M. Hlawitschka, B. Hamann.

“Adaptive Extraction and Quantification of Geophysical Vortices,” In IEEE Transactions on Visualization and Computer Graphics, Proceedings of the 2011 IEEE Visualization Conference, Vol. 17, No. 12, pp. 2088--2095. 2011.

2010

M. Berger, L.G. Nonato, V. Pascucci, C.T. Silva.

“Fiedler Trees for Multiscale Surface Analysis,” In Computers & Graphics, Vol. 34, No. 3, Note: Special Issue of Sha, pp. 272--281. June, 2010.

DOI: 10.1016/j.cag.2010.03.009

In this work we introduce a new hierarchical surface decomposition method for multiscale analysis of surface meshes. In contrast to other multiresolution methods, our approach relies on spectral properties of the surface to build a binary hierarchical decomposition. Namely, we utilize the first nontrivial eigenfunction of the Laplace–Beltrami operator to recursively decompose the surface. For this reason we coin our surface decomposition the Fiedler tree. Using the Fiedler tree ensures a number of attractive properties, including: mesh-independent decomposition, well-formed and nearly equi-areal surface patches, and noise robustness. We show how the evenly distributed patches can be exploited for generating multiresolution high quality uniform meshes. Additionally, our decomposition permits a natural means for carrying out wavelet methods, resulting in an intuitive method for producing feature-sensitive meshes at multiple scales.
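The recursive spectral split described in this abstract can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' method: the combinatorial graph Laplacian of the mesh's edge graph is swapped in for the Laplace–Beltrami operator, the Fiedler vector is approximated by shifted power iteration, patches are cut at the median value to keep them balanced, and each patch is assumed to be connected. The names `fiedler_vector`, `fiedler_tree`, and `leaves` are hypothetical.

```python
import statistics


def fiedler_vector(vertices, edges, iterations=500):
    """Approximate the first nontrivial Laplacian eigenvector of a patch."""
    index = {v: i for i, v in enumerate(vertices)}
    n = len(vertices)
    neighbors = [[] for _ in range(n)]
    for a, b in edges:
        if a in index and b in index:  # keep only edges inside this patch
            neighbors[index[a]].append(index[b])
            neighbors[index[b]].append(index[a])
    # shift exceeds the largest Laplacian eigenvalue (at most 2 * max degree),
    # so power iteration on (shift * I - L) favors the smallest eigenvalues.
    shift = 2.0 * max(len(nb) for nb in neighbors) + 1.0
    x = [float(i) for i in range(n)]
    for _ in range(iterations):
        mean = sum(x) / n
        x = [xi - mean for xi in x]  # remove the constant (trivial) mode
        norm = sum(xi * xi for xi in x) ** 0.5
        x = [xi / norm for xi in x]
        x = [shift * x[i] - (len(neighbors[i]) * x[i]
                             - sum(x[j] for j in neighbors[i]))
             for i in range(n)]
    return x


def fiedler_tree(vertices, edges, depth):
    """Return nested tuples whose leaves are lists of vertices (patches)."""
    if depth == 0 or len(vertices) < 2:
        return list(vertices)
    values = fiedler_vector(vertices, edges)
    cut = statistics.median(values)
    left = [v for v, x in zip(vertices, values) if x <= cut]
    right = [v for v, x in zip(vertices, values) if x > cut]
    if not left or not right:
        return list(vertices)
    return (fiedler_tree(left, edges, depth - 1),
            fiedler_tree(right, edges, depth - 1))


def leaves(tree):
    """Collect the leaf patches of a tree built by fiedler_tree."""
    if isinstance(tree, tuple):
        return leaves(tree[0]) + leaves(tree[1])
    return [tree]


if __name__ == "__main__":
    path_edges = [(i, i + 1) for i in range(7)]  # a path of 8 vertices
    print(leaves(fiedler_tree(list(range(8)), path_edges, depth=2)))
```

On a real surface mesh, a cotangent-weighted Laplacian and a sparse eigensolver would be the more usual choices; the dense power iteration here only keeps the sketch dependency-free.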

T. Etiene, L.G. Nonato, C.E. Scheidegger, J. Tierny, T.J. Peters, V. Pascucci, R.M. Kirby, C.T. Silva.

“Topology Verification for Isosurface Extraction,” SCI Technical Report, No. UUSCI-2010-003, SCI Institute, University of Utah, 2010.

S. Gerber, P.-T. Bremer, V. Pascucci, R.T. Whitaker.

“Visual Exploration of High Dimensional Scalar Functions,” In IEEE Transactions on Visualization and Computer Graphics, Vol. 16, No. 6, IEEE, pp. 1271--1280. Nov, 2010.

DOI: 10.1109/TVCG.2010.213

PubMed ID: 20975167

S. Jadhav, H. Bhatia, P.-T. Bremer, J.A. Levine, L.G. Nonato, V. Pascucci.

“Consistent Approximation of Local Flow Behavior for 2D Vector Fields using Edge Maps,” SCI Technical Report, No. UUSCI-2010-004, SCI Institute, University of Utah, 2010.

S. Kumar, V. Vishwanath, P. Carns, V. Pascucci, R. Latham, T. Peterka, M. Papka, R. Ross.

“Towards Efficient Access of Multi-dimensional, Multi-resolution Scientific Data,” In Proceedings of the 5th Petascale Data Storage Workshop, Supercomputing 2010, pp. (in press). 2010.

J. Tierny, J. Daniels II, L.G. Nonato, V. Pascucci, C.T. Silva.

“Interactive Quadrangulation with Reeb Atlases and Connectivity Textures,” SCI Technical Report, No. UUSCI-2010-006, SCI Institute, University of Utah, 2010.

H.T. Vo, D.K. Osmari, B. Summa, J.L.D. Comba, V. Pascucci, C.T. Silva.

“Streaming-Enabled Parallel Dataflow Architecture for Multicore Systems,” In Computer Graphics Forum, Vol. 29, No. 3, Wiley-Blackwell, pp. 1073--1082. Aug, 2010.

DOI: 10.1111/j.1467-8659.2009.01704.x

We propose a new framework design for exploiting multi-core architectures in the context of visualization dataflow systems. Recent hardware advancements have greatly increased the levels of parallelism available with all indications showing this trend will continue in the future. Existing visualization dataflow systems have attempted to take advantage of these new resources, though they still have a number of limitations when deployed on shared memory multi-core architectures. Ideally, visualization systems should be built on top of a parallel dataflow scheme that can optimally utilize CPUs and assign resources adaptively to pipeline elements. We propose the design of a flexible dataflow architecture aimed at addressing many of the shortcomings of existing systems including a unified execution model for both demand-driven and event-driven models; a resource scheduler that can automatically make decisions on how to allocate computing resources; and support for more general streaming data structures which include unstructured elements. We have implemented our system on top of VTK with backward compatibility. In this paper, we provide evidence of performance improvements on a number of applications.

2009

E.W. Bethel, C.R. Johnson, S. Ahern, J. Bell, P.-T. Bremer, H. Childs, E. Cormier-Michel, M. Day, E. Deines, P.T. Fogal, C. Garth, C.G.R. Geddes, H. Hagen, B. Hamann, C.D. Hansen, J. Jacobsen, K.I. Joy, J. Krüger, J. Meredith, P. Messmer, G. Ostrouchov, V. Pascucci, K. Potter, Prabhat, D. Pugmire, O. Rubel, A.R. Sanderson, C.T. Silva, D. Ushizima, G.H. Weber, B. Whitlock, K. Wu.

“Occam's Razor and Petascale Visual Data Analysis,” In Journal of Physics: Conference Series, Vol. 180, No. 012084, pp. (published online). 2009.

DOI: 10.1088/1742-6596/180/1/012084

One of the central challenges facing visualization research is how to effectively enable knowledge discovery. An effective approach will likely combine application architectures that are capable of running on today's largest platforms to address the challenges posed by large data with visual data analysis techniques that help find, represent, and effectively convey scientifically interesting features and phenomena.

C.D. Hansen, C.R. Johnson, V. Pascucci, C.T. Silva.

“Visualization for Data-Intensive Science,” In The Fourth Paradigm: Data-Intensive Science, Edited by S. Tansley and T. Hey and K. Tolle, Microsoft Research, pp. 153--164. 2009.

K. Potter, A. Wilson, P.-T. Bremer, D. Williams, C. Doutriaux, V. Pascucci, C.R. Johnson.

“Visualization of Uncertainty and Ensemble Data: Exploration of Climate Modeling and Weather Forecast Data with Integrated ViSUS-CDAT Systems,” In J. Phys.: Conf. Ser., Vol. 180, No. 012089, IOP Publishing, pp. 012089. July, 2009.

DOI: 10.1088/1742-6596/180/1/012089

K. Potter, A. Wilson, P.-T. Bremer, D. Williams, C. Doutriaux, V. Pascucci, C.R. Johnson.

“Ensemble-Vis: A Framework for the Statistical Visualization of Ensemble Data,” In Proceedings of the 2009 IEEE International Conference on Data Mining Workshops, pp. 233--240. 2009.